What AI Actually Is: A Plain Guide to the Technology Everyone Is Talking About Right Now

Part 1 of 3 — The AnnariSystems AI Series

Everyone is talking about AI. Your clients are asking about it. Your competitors are claiming it. And somewhere underneath all the noise is a simple question nobody seems to answer cleanly: what is it actually?

Not what it will do in ten years. Not whether it will take jobs. What it is, right now, in 2026, and how it works. That is what this post is about.

The Person Who Read Everything

Imagine someone who spent twenty years doing nothing but reading. Every book, Wikipedia page, forum argument, scientific paper, and blog post they could find. They never went to a classroom. They just read. Billions of pages. And over time they got extraordinarily good at one thing: given any sentence, predict what word comes next.

That person is an LLM, a Large Language Model. It is a program trained on a massive chunk of human writing, and it learned one core skill: predict the next word. Do that one word at a time, many times a second, and you get something that sounds like it is thinking.

Claude, GPT-4, Gemini: these are all LLMs. Big readers who got very good at continuing your sentence.

| The “Large” in LLM refers to how many parameters (internal dials) the model has, often in the billions. More parameters generally means more nuance: the model gets better at picking the right word for the right context. It is not magic. It is pattern recognition at an almost incomprehensible scale. |
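
To make "predict the next word" concrete, here is a deliberately tiny sketch. It is not how a real LLM is built (those use neural networks with billions of parameters); it only counts which word follows which in a made-up sentence. The corpus and the function names are illustrative assumptions.

```python
from collections import Counter, defaultdict

def train_bigram(text: str) -> dict[str, Counter]:
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    follows: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def predict_next(follows: dict[str, Counter], word: str):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    candidates = follows.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]

# Made-up training text, standing in for "billions of pages".
corpus = "the striker scores and the striker celebrates and the keeper dives"
model = train_bigram(corpus)

print(predict_next(model, "the"))  # striker ("the striker" appears twice, "the keeper" once)
```

A real model does the same job with far more context than one previous word, which is where the nuance comes from.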

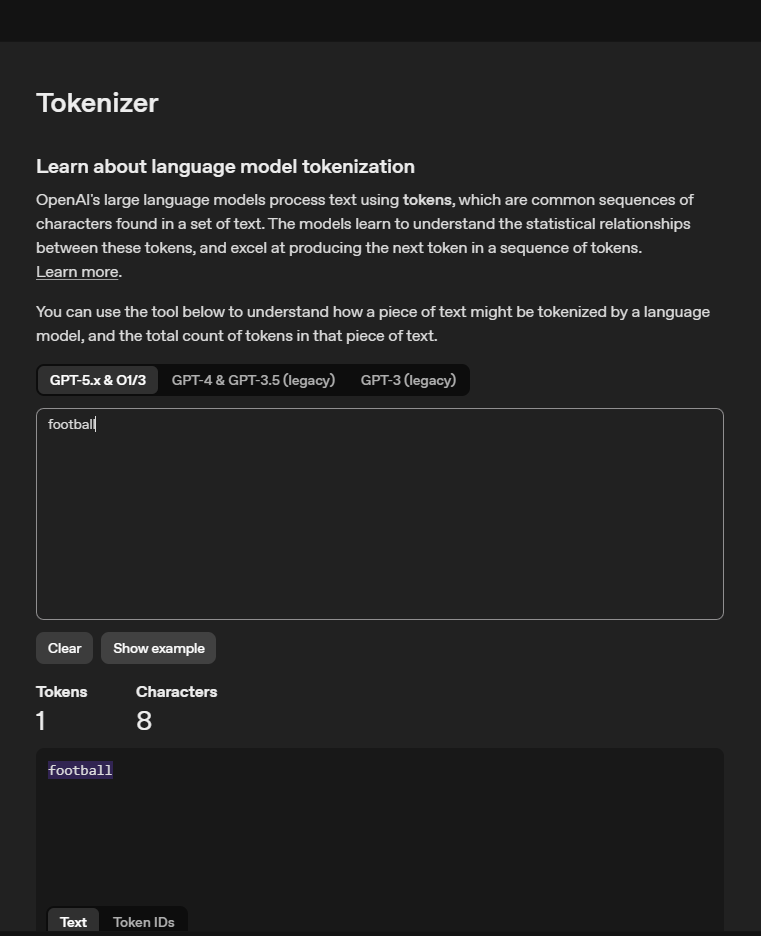

Tokens: The Unit of Work

When you type a message to an AI, it does not read your words the way you do. It breaks them into chunks called tokens. A token is roughly three to four characters, or about three quarters of a word on average. The word “football” is typically one token. A full paragraph might be eighty to a hundred tokens.

A quick note: common words are not cheaper per token. They are just more likely to be a single token rather than broken into pieces. “The” is one token. A long technical term you rarely see in English text might be four or five tokens. Frequency during training made it efficient, not free.
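
The splitting idea can be sketched in a few lines. This is a simplification: production tokenizers learn their vocabulary from data (byte-pair encoding and similar methods), whereas this toy uses a hand-picked, hypothetical vocabulary and a greedy longest-match rule. It only illustrates why a familiar word stays whole while a rarer one breaks into pieces.

```python
# Hypothetical mini-vocabulary; real tokenizers learn tens of thousands of pieces.
VOCAB = {"the", "foot", "ball", "football", "token", "is", "ation", "er", "s"}

def tokenize(word: str, vocab: set[str] = VOCAB) -> list[str]:
    """Split one word into the longest known pieces, left to right.

    Characters the vocabulary does not cover become single-character tokens.
    """
    pieces = []
    i = 0
    while i < len(word):
        # Try the longest possible piece first, shrinking until one matches.
        for j in range(len(word), i, -1):
            if word[i:j] in vocab:
                pieces.append(word[i:j])
                i = j
                break
        else:
            # Nothing matched: fall back to a single character.
            pieces.append(word[i])
            i += 1
    return pieces

print(tokenize("football"))      # ['football']
print(tokenize("footballers"))   # ['football', 'er', 's']
print(tokenize("tokenisation"))  # ['token', 'is', 'ation']
```

The common word costs one token; the longer, rarer forms cost three each.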

This matters because tokens are how AI companies measure and charge for their service. Every token in, every token out: each one is a unit of computation, and each computation has a cost.

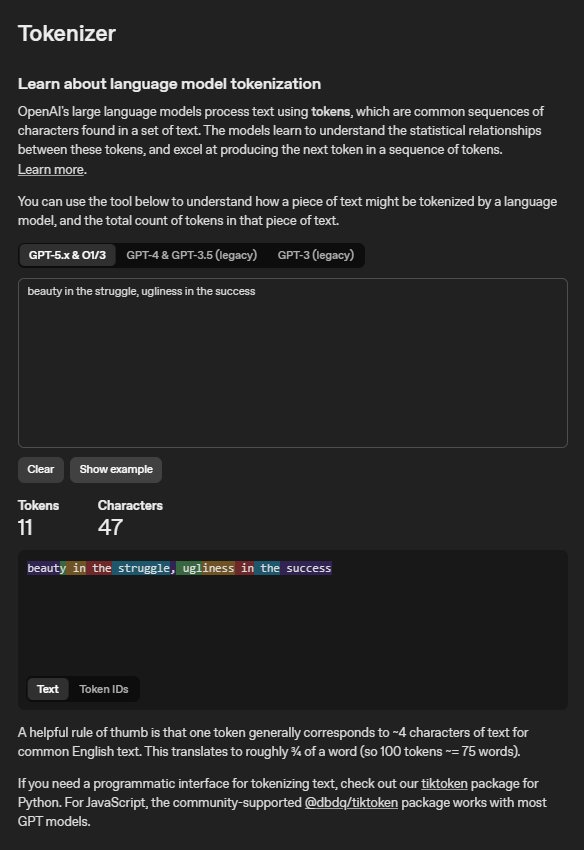

So are tokens just a billing trick?

Not quite. When an LLM processes text, it genuinely converts everything into numerical chunks before it can work with it. That chunking process is called tokenisation, and it is a real, necessary step in how the model functions. Tokens exist because the model needs them to work. That part is not artificial.

But using tokens as the billing unit was a choice. AI companies could have billed by word count, character count, or time spent. They chose tokens because tokens map most directly to what actually costs them money: the amount of computation their hardware does.

When you type a message on the free tier, you are not spending anything. Anthropic is. Every time you send a message, their servers (those thousands of GPUs running in data centres) spin up and do real work. That work costs real electricity, real hardware, real engineers. Anthropic absorbs that cost for free users up to a point, and the token limit is where they draw the line. Think of it like data on a mobile bundle from MTN or a satellite connection like Starlink: you are not handing them money every time you load a page. You bought a bundle, and each page eats into it. When it runs out, you are cut off until it resets.

More tokens in and out means more calculations, more memory, more electricity. Billing by token is billing by the unit of work, the same logic as paying for electricity by the kilowatt hour or for data by the megabyte.

To see tokenisation in action, paste some text into OpenAI’s tokenizer tool: https://platform.openai.com/tokenizer

One thing worth knowing: Anthropic, OpenAI, and Google all use slightly different tokenisation systems. The same sentence that costs you twenty tokens with Claude might cost twenty-two with GPT-4. Not standardised. Each company made their own choice.

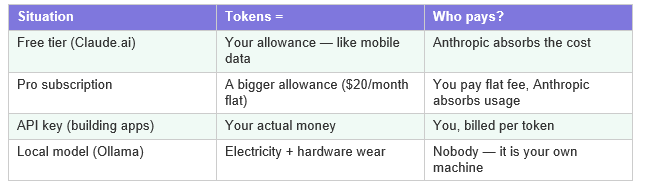

What tokens actually cost you

| Real example: “Hello, can you help me write an article about swimming?” is about 14 tokens in. A 500-word reply is around 650 tokens out. Total: ~664 tokens. At a flat $3 per million tokens (Claude Sonnet’s input rate; output tokens are billed at a higher rate), that exchange costs about $0.002, a small fraction of a cedi. But multiply that across thousands of business requests per day and it adds up. |
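
The arithmetic in that example can be written out as a short calculation. This sketch applies the flat $3 per million tokens from the callout to both directions; providers commonly price input and output tokens separately, so the function takes two rates. The function name and the 5,000 requests per day figure are illustrative assumptions, not real usage data.

```python
def exchange_cost_usd(tokens_in: int, tokens_out: int,
                      usd_per_million_in: float,
                      usd_per_million_out: float) -> float:
    """Cost of one request/response pair, billed per token."""
    total = tokens_in * usd_per_million_in + tokens_out * usd_per_million_out
    return total / 1_000_000

# The example above: 14 tokens in, 650 tokens out, flat $3 per million.
one_exchange = exchange_cost_usd(14, 650, 3.0, 3.0)
print(f"One exchange: ${one_exchange:.6f}")  # One exchange: $0.001992

# Assumed volume for illustration: 5,000 similar requests per day.
requests_per_day = 5_000
print(f"Per day: ${one_exchange * requests_per_day:.2f}")  # Per day: $9.96
```

One exchange is a rounding error. A few thousand a day is a line item worth budgeting for.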

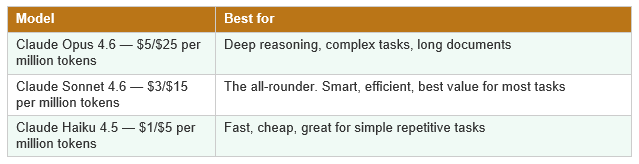

Models: The Different Brains

In football, not every player is built the same. A pacey winger is not suited for holding midfield. A target striker does not play the same role as a false nine. Same sport, different builds, different purposes.

AI models work the same way. A model is a specific trained brain: a particular set of learned weights frozen at a point in time. Different models from the same company are like different signings under the same club philosophy.

OpenAI has GPT-4o, o1, o3. Google has Gemini. Meta has Llama. Anthropic has Claude. Each company trains their own, with different strengths and costs, but they are all doing the same fundamental thing: predicting the next token.

Agents: When the Brain Gets Hands

A brilliant tactical analyst sitting in the stands can see everything. They can tell you what is wrong, what should happen next, what the opposition is doing. But they cannot run out and make the pass themselves. They are observers, not actors.

A plain LLM is that analyst. It can advise, write, plan, explain. But it cannot do. It has no hands. It cannot browse the web, send an email, check your database, or book a meeting. It only exists inside a conversation window.

An agent is what you get when you give that analyst a radio, boots, and permission to step onto the pitch. An agent is an LLM connected to tools (search the web, read a file, call an API, execute code, send a message) and given a goal to complete on its own.

| Example: You tell an agent: “Check our inbox, find all client emails from this week, summarise them, and draft replies.” A plain LLM cannot do that. An agent reads the inbox (tool), processes the emails (brain), writes drafts (brain), and saves them to your drafts folder (tool). No hand-holding required. |
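
The brain-plus-tools loop from that example can be sketched as follows. Everything here is a stand-in: `fake_model` is a scripted function playing the role of the LLM, and `read_inbox` and `save_draft` are hypothetical tools that return canned data instead of touching a real mailbox. The point is only the shape of the loop: the model decides, the tool acts, the result is fed back.

```python
from typing import Callable

DRAFTS: list[str] = []

def read_inbox() -> list[str]:
    """Hypothetical tool: returns canned emails instead of a real inbox."""
    return ["Ama: Can we move Friday's meeting?", "Kofi: Please resend the invoice."]

def save_draft(text: str) -> str:
    """Hypothetical tool: stores a draft reply in memory."""
    DRAFTS.append(text)
    return f"draft {len(DRAFTS)} saved"

TOOLS: dict[str, Callable] = {"read_inbox": read_inbox, "save_draft": save_draft}

def fake_model(goal: str, history: list[dict]) -> dict:
    """Stand-in for an LLM: picks the next action from what has happened so far."""
    if not history:
        return {"action": "read_inbox", "args": []}
    emails = history[0]["result"]
    drafted = len(history) - 1
    if drafted < len(emails):
        sender = emails[drafted].split(":")[0]
        return {"action": "save_draft", "args": [f"Hi {sender}, thanks for your email."]}
    return {"action": "finish", "args": []}

def run_agent(goal: str, model=fake_model, max_steps: int = 10) -> list[dict]:
    """Loop: ask the model for an action, run the matching tool, record the result."""
    history: list[dict] = []
    for _ in range(max_steps):
        decision = model(goal, history)
        if decision["action"] == "finish":
            break
        result = TOOLS[decision["action"]](*decision["args"])
        history.append({"action": decision["action"], "result": result})
    return history

steps = run_agent("Read this week's client emails and draft replies")
print([step["action"] for step in steps])  # ['read_inbox', 'save_draft', 'save_draft']
```

The `max_steps` cap is a design choice worth keeping in any real version: an agent that never decides it is finished should not run forever.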

Harnesses: A Word That Means Two Things

This is one of those AI terms that gets used differently depending on who you are talking to. Both meanings are real and worth knowing.

- Meaning 1 — The Evaluation Harness

Before a football club signs a player, they run them through proper fitness tests and tactical evaluations. Not “he looks good on the ball”, but actual measured, repeatable assessments.

In AI, an evaluation harness is that testing system for models. It is a structured framework that runs a model through thousands of test questions and scores it consistently. When a company says “our model scores 92% on MMLU”, that number came from an evaluation harness.

| Famous benchmarks and frameworks that evaluation harnesses run: • MMLU, which tests knowledge across 57 subjects (law, history, maths, medicine) • HumanEval, which tests the ability to write working code • HELM, which covers accuracy, fairness, and efficiency |
| One caveat: a model can be optimised to score well on tests without being genuinely better at real tasks. Harness scores are useful signals, not the full picture. |
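
At its core, an evaluation harness is a loop that asks every question, compares the answer to the expected one, and reports a score. The sketch below assumes a three-question test set and a `toy_model` lookup function, both invented for illustration. Real harnesses add thousands of questions, careful prompt formatting, and more forgiving answer matching.

```python
from typing import Callable

def evaluate(model: Callable[[str], str], test_cases: list[tuple[str, str]]) -> float:
    """Run every question through the model and return the fraction answered correctly."""
    correct = sum(
        1 for question, expected in test_cases
        if model(question).strip().lower() == expected.lower()
    )
    return correct / len(test_cases)

# Invented test set: (question, expected answer) pairs.
TEST_CASES = [
    ("What is 2 + 2?", "4"),
    ("Capital of Ghana?", "Accra"),
    ("Capital of France?", "Paris"),
]

def toy_model(question: str) -> str:
    """Stand-in for a real model: it only knows two of the three answers."""
    answers = {"What is 2 + 2?": "4", "Capital of Ghana?": "Accra"}
    return answers.get(question, "I don't know")

score = evaluate(toy_model, TEST_CASES)
print(f"Score: {score:.0%}")  # Score: 67%
```

Because the same questions and the same scoring rule are applied every time, two models can be compared on equal terms.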

- Meaning 2 — The Agent Harness

This second meaning matters more for practical deployment. An agent harness is the operational infrastructure that wraps around an LLM to make it reliable in production.

The simplest way to understand it: the model is the brain, but a brain alone cannot do anything useful in the real world. It needs an operating system. The agent harness is that operating system.

| The computing analogy: • The Model = the CPU (cognitive processing power) • The Context Window = RAM (limited, volatile working memory) • The Agent Harness = the Operating System (manages tools, memory, execution) • The Agent = the Application running on top |
| The harness does not reason. It executes. |
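
One concrete job a harness does is memory management: deciding which parts of a conversation still fit in the context window. This is a minimal sketch under stated assumptions. It estimates tokens with the rough four-characters-per-token rule of thumb instead of a real tokenizer, and it simply keeps the newest messages. The function names and the budget values are hypothetical.

```python
def estimate_tokens(text: str) -> int:
    """Rough rule of thumb: about four characters per token (not a real tokenizer)."""
    return max(1, len(text) // 4)

def fit_to_context(messages: list[str], budget: int) -> list[str]:
    """Keep the most recent messages whose estimated tokens fit within `budget`."""
    kept: list[str] = []
    used = 0
    # Walk backwards from the newest message and stop once the budget is spent.
    for message in reversed(messages):
        cost = estimate_tokens(message)
        if used + cost > budget:
            break
        kept.append(message)
        used += cost
    return list(reversed(kept))

conversation = ["a" * 40, "b" * 40, "c" * 40]  # roughly 10 tokens each
print(len(fit_to_context(conversation, budget=25)))  # 2 (only the two newest fit)
```

Notice that no reasoning happens here. The harness just counts and cuts, which is exactly the operating-system role described above.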

Local vs Cloud: The Coaching Setup

Two types of coaching arrangements. First: you call a world-class academy. They analyse your team remotely and send recommendations. Fast and expert, but you depend on their availability and their pricing, and they hear everything you tell them.

Second: you hire a coach who moves into your training facility. On-site. No phone connection needed. Nothing discussed leaves the compound. More work to set up, but once they are there, they are entirely yours.

That is cloud vs local. A cloud model (Claude, GPT-4, Gemini) runs on a company’s servers. Fast, powerful, regularly updated, but your data leaves your machine. A local model runs entirely on your own hardware. Nothing leaves. No subscription. No internet required after the initial download.

| When local makes sense: • Client data that must stay confidential (medical, legal, financial) • Areas with unreliable internet, which is very relevant in Ghana • High-volume tasks where cloud costs become painful |
| When cloud makes sense: • You need maximum model intelligence • You are prototyping and want fast setup • You need the model’s knowledge to stay current |

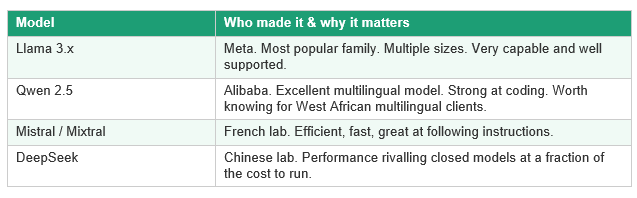

Open Source Models: The Open Training Facility

A closed training facility has great coaches but you never see the playbook. An open facility publishes its entire methodology: the drills, tactics, scouting reports. Anyone can study it, copy it, build their own version.

Open source AI models are the second type. The weights, the trained brain, are publicly released. Anyone can download, run, and modify them, and build products on top, usually free of charge and subject to each model’s licence.

API Key vs Subscription: Two Very Different Deals

Two ways to access a stadium. First: a season ticket. Flat fee, show your card, walk in for every match. You do not think about cost per game.

Second: you are a TV broadcaster. Every time you want footage, you pay per minute. No flat fee. The more you use, the more you pay. But you are building something: packaging the content for others.

A subscription (Claude.ai Pro, ChatGPT Plus) is the season ticket. Fixed monthly fee. Built for individual use. An API key is the broadcaster deal. Pay per token consumed. Built for developers building products that use AI under the hood.
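
A rough way to compare the two deals is to ask how many tokens a flat fee would buy at pay-per-token rates. The numbers below are assumptions for illustration only: a $20 monthly fee, a flat $3 per million tokens, and the 664-token exchange from the earlier example. Subscriptions also come with their own usage limits, so treat this as a back-of-envelope sketch, not a like-for-like comparison.

```python
def breakeven_tokens(monthly_fee_usd: float, usd_per_million_tokens: float) -> float:
    """Tokens per month at which pay-per-token spend equals the flat fee."""
    return monthly_fee_usd / usd_per_million_tokens * 1_000_000

# Assumed figures, not quoted prices.
tokens = breakeven_tokens(monthly_fee_usd=20.0, usd_per_million_tokens=3.0)
exchanges = int(tokens // 664)  # 664 tokens per exchange, from the earlier example

print(f"Break-even: about {tokens / 1_000_000:.2f} million tokens per month")
print(f"That is roughly {exchanges} exchanges of 664 tokens")
```

Below that volume, paying per token is the cheaper deal on these assumptions. Above it, the per-token bill overtakes the flat fee.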

Ready to explore what AI can do for your organisation?

Contact AnnariSystems today. Whether you want to understand your options, run a private local AI demo, or just have an honest conversation about what is realistic for your sector — we are the right starting point.

📧 Email: info@annarisystems.com

💬 WhatsApp: +233 (545) 702-789

📍 AnnariSystems: practical AI for global businesses, on your terms.